Solutions

Unlock your business transformation with our smart IT infrastructure services and solutions.

Technology Consulting & Leadership

IT Services Outsourcing

Cybersecurity & Compliance

AI & Automation

Data & Analytics

Industries

Ensure your unique data and process requirements are met with IT solutions built on deep domain experience and expertise.

Company

At Coretelligent, we’re redefining the essence of IT services to emphasize true partnership and business alignment.

Insights

Get our perspective on the connections between technology and business and how they affect you.

Our Locations

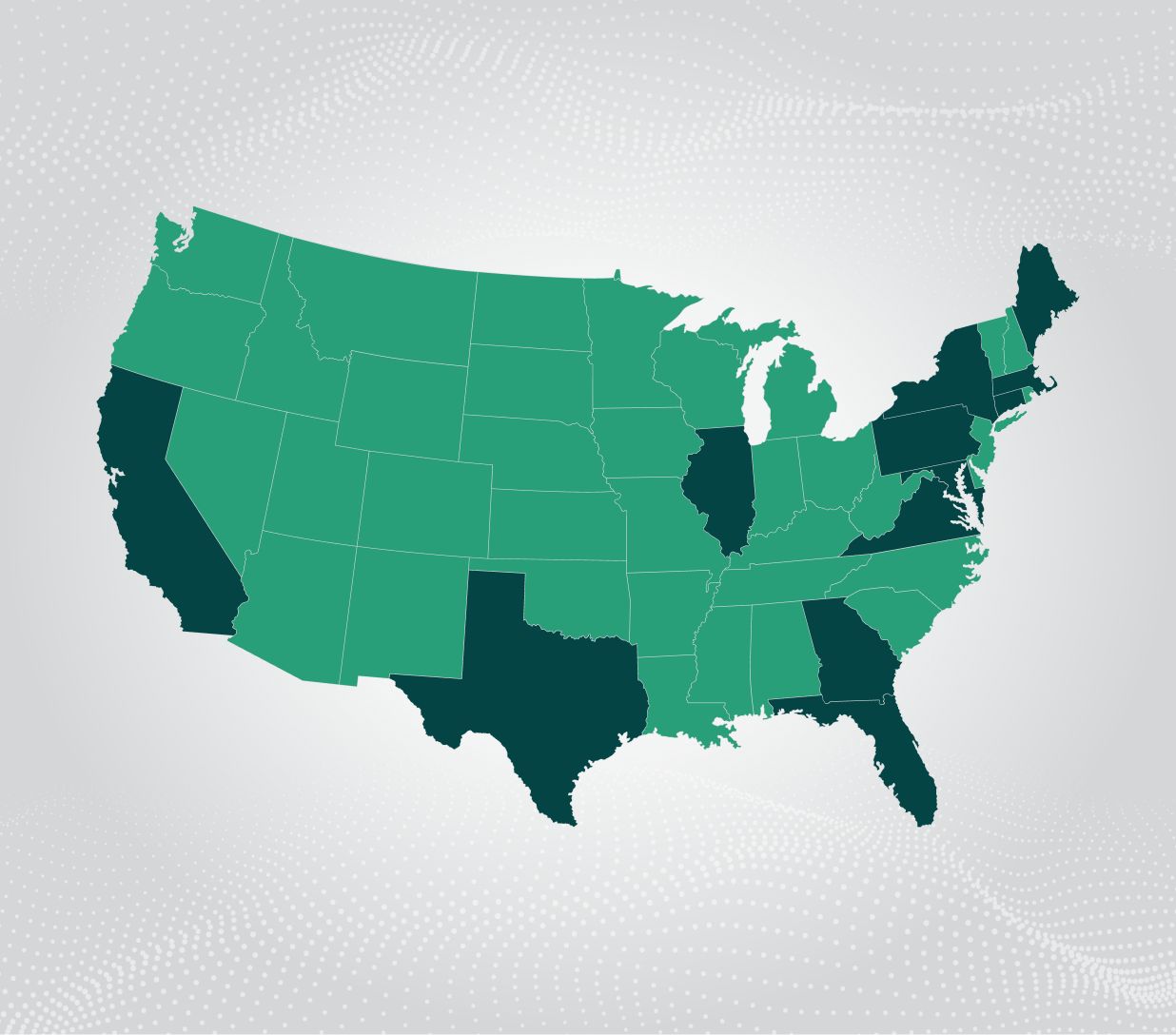

Explore our office locations and how we deliver seamless support across all your sites.