A firm recently faced an incident that shaped a critical conversation. An employee, working through an AI tool, gained access to privileged payroll data. The data was real. The access was unintended. The governance to prevent it had not been put in place before the AI tool was deployed.

The instinct in moments like this is to tighten access controls. That instinct is correct, and the work matters. However, a deeper question often gets missed.

Even if access controls had been correctly scoped, what would have determined whether the AI’s output was appropriate to deliver? Most conversations about AI governance for financial services have not yet reached that question.

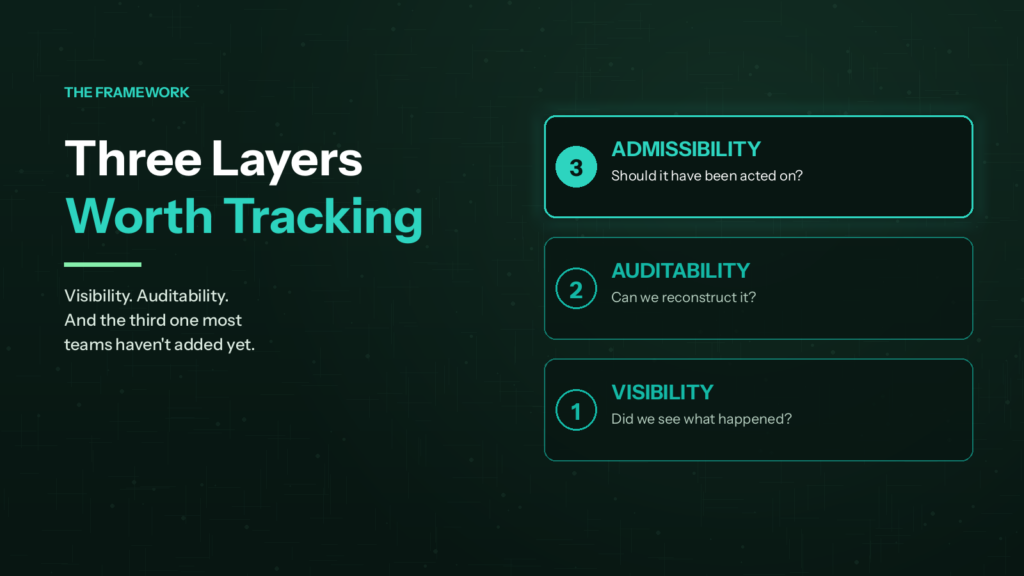

This article explores the layer most teams have not added yet. It sits beyond visibility and auditability. It is admissibility.

What Most Firms Are Already Doing Well

Visibility is improving across most environments. AI activity is being logged. Usage is being tracked. Vendors are being asked tougher questions about how their tools handle data.

Auditability is improving too. Decision paths are being documented. Outputs are being captured. Governance frameworks are maturing.

Both layers are foundational. The work matters, and it should continue. Yet a third layer is now worth adding to the conversation.

It does not replace the first two. Instead, it builds on them. Admissibility is the question of whether an AI output should have been acted on at all.

Why AI Governance for Financial Services Now Demands More

Financial services firms operate inside one of the most regulated environments in the economy. As AI moves deeper into client-facing workflows, the stakes climb quickly.

Visibility tells you what happened. Auditability tells you whether you can reconstruct it. However, neither tells you whether the output should have been acted on.

That is a different question. Moreover, in regulated environments, it is increasingly the question that matters most.

A firm can have full activity logs. It can have clean audit trails. It can have explainable outputs. And it can still act on AI-generated information that was not appropriate for the decision in front of it. As in the opening example, an output can also be delivered to someone who should not have received it.

That is the admissibility gap. Closing it requires a discipline most firms have not yet built.

The Regulatory Ground Has Already Shifted

The direction is now unambiguous. Examiners will assess how firms evaluate AI tools before deployment.

They will also review how firms monitor AI-generated outputs. In addition, examiners will check how firms document human oversight of material decisions.

Earlier enforcement actions established the underlying principle. Firms must substantiate their AI claims, not simply assert them. Representations about how AI is used must match how it is actually used.

Read together, the regulatory posture has shifted. The question is no longer “Do you have an AI policy?” Instead, it is “Can you demonstrate that AI outputs influencing decisions met a defined standard before they were used?”

That shift is what makes admissibility a control question, not just a conceptual one. Consequently, AI governance for financial services now requires evidence, not just intent.

Where the Discipline Comes From

Finance leaders already apply admissibility standards every day. The discipline operates almost without thinking.

Firms do not admit every data point into financial reporting just because it has been documented. Instead, they apply criteria. Source reliability. Methodology. Completeness. Materiality.

These are evidentiary standards. They are defined before the decision, not after. The audit trail proves what happened. However, the admissibility standard determines whether the evidence is usable in the first place.

That distinction sits at the core of financial control. It also offers a useful lens for thinking about AI outputs in regulated environments.

What an Admissibility Lens Looks Like in Practice

For most organizations, admissibility is not a new framework to build. Rather, it is a question to start asking. When AI influences a decision, four sub-questions help frame whether the output should be relied on.

Source

Where did the AI’s information come from? Is the source authoritative for the question being asked? Is the data current and complete?

Source questions surface fast. Many AI tools draw from training data, web sources, or internal documents that have not been validated. Therefore, knowing what fed the output is the starting point.

Context

Was the model designed for this kind of decision? Or is it being applied outside its intended use?

Context drift is one of the most common failure modes. A model validated for one purpose often gets repurposed for another without reassessment. Consequently, outputs can carry hidden assumptions that no longer hold.

Materiality

Does the output influence a financial, regulatory, or customer-facing outcome? If so, what is the threshold for human review?

Not every AI output requires the same scrutiny. However, material decisions deserve material oversight. Mapping outputs to materiality is the work most firms still owe themselves.

Decision Class

Is the output feeding an automated, advisory, or final decision? Different classes require different levels of validation.

In our work with regulated firms, decision class is the question most often missed. The instinct is to categorize AI outputs as either fully automated or fully advisory. Yet the most exposed category is the one in the middle.

That middle category includes outputs casually relied on without being explicitly trusted. It is also where regulatory enforcement has been concentrating. Furthermore, it is where most firms have the least documentation.
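The four questions above can be sketched as a simple pre-use check. This is a minimal illustration in Python, not a prescribed implementation. The `AIOutput` fields, the `DecisionClass` values, and the review rule are all assumptions made for the example. Each firm would define its own criteria and thresholds.

```python
from dataclasses import dataclass
from enum import Enum


class DecisionClass(Enum):
    # Hypothetical labels for the three classes named in the text.
    ADVISORY = "advisory"
    AUTOMATED = "automated"
    FINAL = "final"


@dataclass(frozen=True)
class AIOutput:
    # Every field is an illustrative stand-in for one of the four questions.
    source_validated: bool      # Source: authoritative, current, complete
    within_intended_use: bool   # Context: used for the purpose it was validated for
    material: bool              # Materiality: financial, regulatory, or customer impact
    decision_class: DecisionClass
    human_reviewed: bool = False


def admissibility_issues(output: AIOutput) -> list[str]:
    """Return the reasons an output should not yet be relied on (empty if none)."""
    issues = []
    if not output.source_validated:
        issues.append("source not validated")
    if not output.within_intended_use:
        issues.append("used outside intended context")
    # Assumed policy: material outputs, and anything feeding an automated
    # or final decision, need documented human review before use.
    needs_review = output.material or output.decision_class is not DecisionClass.ADVISORY
    if needs_review and not output.human_reviewed:
        issues.append("human review required")
    return issues


# A material advisory output from a model applied outside its validated purpose.
draft = AIOutput(
    source_validated=True,
    within_intended_use=False,
    material=True,
    decision_class=DecisionClass.ADVISORY,
)
print(admissibility_issues(draft))
# ['used outside intended context', 'human review required']
```

Returning a list of reasons rather than a single pass/fail flag keeps the record of why an output was held back, which is the kind of evidence an examiner is likely to ask for.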

Why AI Governance for Financial Services Belongs on the Finance Agenda

Admissibility is not primarily a technology question. Instead, it is a control question.

Determining whether evidence is usable for a decision is something finance has done for as long as finance has existed. AI does not change the principle. It expands the set of inputs the principle has to be applied to.

Consequently, this lens fits naturally on the CFO’s desk. Not because finance understands AI better than other functions, but because finance understands what makes evidence usable for a decision.

That perspective is worth bringing to the AI governance conversation. Moreover, it aligns directly with the regulatory expectations now reshaping the industry.

Where to Start Building Admissibility Standards

Most firms do not need to overhaul their governance programs to begin. They need to start asking sharper questions about the AI already operating inside their environments.

First, inventory where AI is influencing decisions. This includes vendor-embedded AI features that may not yet appear in any policy document. The gap between AI usage and AI oversight has widened sharply in regulated industries.

Second, classify each use by decision class. Identify which AI outputs are advisory, which feed automated or final decisions, which influence material decisions, and which operate without clear ownership.

Third, document the standards that should apply before each output is used. The documentation does not have to be complex. However, it must exist before an examiner asks for it.
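The three steps above could be tracked in something as simple as a small register. The sketch below is one hypothetical way to do that in Python. The `AIUse` fields, the `open_gaps` helper, and the sample entries are invented for illustration and do not describe any particular firm or tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIUse:
    name: str                      # step 1: where AI influences a decision
    decision_class: Optional[str]  # step 2: e.g. "advisory"; None if unclassified
    owner: Optional[str]           # accountable person or team; None if unowned
    standard_doc: Optional[str]    # step 3: reference to the written standard


def open_gaps(inventory: list[AIUse]) -> dict[str, list[str]]:
    """Map each AI use to the governance steps still outstanding."""
    gaps: dict[str, list[str]] = {}
    for use in inventory:
        missing = []
        if use.decision_class is None:
            missing.append("classify decision class")
        if use.owner is None:
            missing.append("assign owner")
        if use.standard_doc is None:
            missing.append("document standard")
        if missing:
            gaps[use.name] = missing
    return gaps


# Hypothetical inventory, including a vendor-embedded feature.
inventory = [
    AIUse("vendor CRM meeting summaries", None, None, None),
    AIUse("expense anomaly flags", "advisory", "Controller", None),
    AIUse("client email drafting", "advisory", "Compliance", "STD-001"),
]
for name, missing in open_gaps(inventory).items():
    print(f"{name}: {', '.join(missing)}")
```

Uses with nothing outstanding drop out of the result, so the output doubles as a worklist of what still needs an owner, a class, or a written standard.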

For deeper context on the broader compliance environment, our overview of financial services compliance covers how AI fits inside the wider regulatory matrix.

What Comes Next for Regulated Firms

The work on visibility and auditability should continue. Both are necessary. Neither is going away.

What admissibility adds is a way of asking whether the outputs flowing through those systems were appropriate to use in the first place. For the firm in the opening example, the work after the incident was not just patching access controls. It was building the standards that should have been in place before.

Visibility, auditability, and admissibility, the layer most teams have not added yet. Together, they form the full shape of mature AI governance for financial services in the current environment.

Building these standards is not a one-time project. Rather, it is an operating discipline that grows alongside the AI environment it governs.

How Coretelligent Helps Regulated Firms Close the Gap

Building admissibility standards into a financial services environment requires more than policy documents. It requires the operational discipline to apply those standards before each AI-influenced decision.

Coretelligent works with financial services firms to align AI governance with regulatory expectations. Our cybersecurity and compliance management solutions help firms operationalize the controls regulators now expect to see. In addition, our financial services IT solutions are designed for the regulated environments where stakes are highest.

The path forward starts with an honest assessment. Where does your firm stand today? Which AI outputs are influencing decisions without a defined standard? How quickly could your team produce evidence that those decisions met an admissibility threshold?

For firms ready to add the third layer to their AI governance program, the conversation begins there. To explore how AI governance maturity is evolving across regulated industries, or to discuss your firm’s specific environment, contact Coretelligent today.