Claude has moved well beyond a clever chat window. Teams now use it to search the web, work across very large context windows, analyze structured work, and support more agent-like workflows. That shift matters because governance now has to cover more than prompts. It has to cover access, action, evidence, and accountability. Meanwhile, 78% of organizations reported using AI in 2024, up from 55% the year before. So the real issue is not whether Claude will show up inside the business. It is whether leadership will govern it before usage becomes invisible.

Why Claude Changed the Governance Conversation

The good news is that Claude is adding enterprise controls as its footprint expands. Anthropic’s enterprise plan includes SSO, role-based permissions, and admin tooling, while stating customer conversations and content are not used to train Claude. Recent admin updates added custom roles, group-based controls, spend limits, and the ability to restrict Cowork by department. However, those controls only help when leaders turn them into policy, monitoring, and review. Tool maturity does not remove executive responsibility. It raises the standard for using the tool well.

What makes Claude especially relevant right now is that its use cases are broadening into executive work. Claude’s web search tool now pulls in real-time content with citations. Anthropic is also pushing Claude into financial workflows through a Claude for Excel beta that can read, analyze, modify, and create workbooks while showing the cells it references. That is why this is no longer purely an innovation topic. It is a finance, compliance, operations, and technology topic.

Claude AI Governance Starts With What Claude Can See

Claude AI governance starts with visibility into what Claude can see. That means files, spreadsheets, prompts, outputs, repositories, connected data sources, and external content. Claude for Enterprise supports very large working context and data integrations. Claude’s web search tool extends that context to live web content. The first governance question is simple. Which categories of information may enter Claude, and under what conditions? That same discipline sits at the center of this strategic AI governance framework and this CFO-ready governance checklist.

That sounds basic. It is not. Once a user can paste a board deck, upload diligence materials, drop in customer data, or connect internal knowledge, the exposure profile changes fast. NIST’s generative AI profile pushes organizations to address trustworthiness, oversight, and risk management for generative AI. So the control question is not whether Claude is useful. It is whether the organization has set clear rules for what Claude may touch before teams build habits around convenience.
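One way to make "which categories may enter Claude, and under what conditions" concrete is to write the rules down as data rather than prose. The sketch below is only an illustration: the category names, the `IntakeRule` type, and the `may_enter` helper are hypothetical and not part of any Anthropic product. It assumes a deny-by-default stance for anything unclassified.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntakeRule:
    allowed: bool
    condition: str = ""  # plain-language condition the user must meet


# Hypothetical categories; a real list would come from the organization's
# own data classification standard.
INTAKE_POLICY = {
    "public_marketing": IntakeRule(allowed=True),
    "internal_knowledge": IntakeRule(allowed=True, condition="approved workspace only"),
    "board_materials": IntakeRule(allowed=False),
    "customer_data": IntakeRule(allowed=False),
}


def may_enter(category: str) -> IntakeRule:
    """Return the rule for a category; unknown categories are denied."""
    return INTAKE_POLICY.get(
        category, IntakeRule(allowed=False, condition="unclassified")
    )
```

The value of a table like this is less the code than the forced decision: every category gets an explicit answer before habits form around convenience.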

Oversharing Is the Quiet Failure Mode

Oversharing is where many rollouts quietly go wrong. Microsoft’s current deployment guidance for Copilot frames the issue clearly: secure rollout starts with remediating oversharing, establishing guardrails, and meeting regulatory obligations. That blueprint treats oversharing, enforceable defaults, and regulatory gaps as foundational issues, and Claude deserves the same treatment. If access remains loose, users will generate value and exposure simultaneously. CFOs feel that through financial data and board materials. CCOs feel it through supervision and recordkeeping. COOs feel it when shortcuts bypass process. CTOs feel it when identity and telemetry lag adoption.

Claude AI Governance Also Covers What Claude Can Do

Claude AI governance also has to account for what Claude can do. The Agent SDK can read files, run commands, search the web, and edit code. Claude Code’s security design uses approval gates and read-only defaults for riskier actions. That is useful. Still, it changes the conversation. Leadership is no longer approving a writing assistant. It is approving a system that can influence workflows, research, code, and decision support. Therefore, acceptable use policies need to cover actions, not just content.

That operational shift is where many teams get surprised. More than half of organizations using AI reported at least one negative consequence, and nearly one-third cited inaccuracy specifically. High performers redesign workflows and define when human validation is required. That finding matters. Productivity without validation creates faster mistakes. In Claude rollouts, those mistakes can show up in financial analysis, compliance narratives, operating procedures, vendor reviews, or engineering decisions. So governance must define where humans review, approve, or challenge outputs before work moves forward.
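A validation rule of this kind can be stated in a few lines. The sketch below is a minimal illustration, not a product feature: the workflow names, the `Output` type, and `can_move_forward` are all hypothetical, and which workflows count as high impact is an assumption each organization would set for itself.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of workflows that require human sign-off.
HIGH_IMPACT_WORKFLOWS = {"financial_analysis", "compliance_narrative", "vendor_review"}


@dataclass
class Output:
    workflow: str
    content: str
    reviewed_by: Optional[str] = None  # named human reviewer, if any


def can_move_forward(output: Output) -> bool:
    """High-impact outputs need a named human reviewer; others pass through."""
    if output.workflow in HIGH_IMPACT_WORKFLOWS:
        return output.reviewed_by is not None
    return True
```

For example, `can_move_forward(Output("financial_analysis", "draft"))` is false until someone fills in `reviewed_by`, while routine work such as meeting notes is not held up.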

The Spreadsheet Layer Changes the Stakes

This gets even more important when Claude moves closer to controlled business workflows. Claude for Excel is a good signal of where executive use is heading. Spreadsheet work touches forecasting, modeling, reporting, diligence, and operational planning. Anthropic’s emphasis on transparency is helpful. Yet transparency alone does not create control. Once a tool enters the spreadsheet layer, governance cannot remain with a small innovation group. Finance, compliance, operations, and technology all need to own part of the framework.

The Evidence Gap Is Where Claude AI Governance Usually Breaks

The evidence gap is where Claude AI governance often breaks down. 66% of organizations expect AI to have the biggest cybersecurity impact this year, yet only 37% report processes for assessing AI tools before deployment. That is the gap that auditors, regulators, insurers, and clients eventually notice. A clean Claude policy is not enough. Leaders need a usable record of approved use cases, owners, integrations, identity controls, logging, model changes, and incident steps. That is also why these vendor AI risk questions matter. Once vendors and internal teams both use AI, proof becomes more important than intention.
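The "usable record" can start as something very small. The sketch below shows one possible shape for an evidence register entry and a check that lists what is still missing. The `UseCaseRecord` fields, the `REQUIRED_EVIDENCE` tuple, and the `evidence_gaps` helper are hypothetical names chosen for illustration; they cover only part of the list above.

```python
from dataclasses import dataclass, field


@dataclass
class UseCaseRecord:
    name: str
    owner: str = ""
    integrations: list = field(default_factory=list)
    identity_controls: str = ""
    logging_enabled: bool = False
    incident_steps: str = ""


# Fields assumed to be mandatory evidence; integrations may legitimately be empty.
REQUIRED_EVIDENCE = ("owner", "identity_controls", "logging_enabled", "incident_steps")


def evidence_gaps(record: UseCaseRecord) -> list:
    """Return the required fields that are still empty or disabled."""
    return [name for name in REQUIRED_EVIDENCE if not getattr(record, name)]
```

A record with an owner and logging turned on, but nothing else, would report `identity_controls` and `incident_steps` as gaps. That is the kind of answer an auditor or client will eventually ask for.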

Evidence matters because governance is broadening, not narrowing. NIST CSF 2.0 puts the Govern function at the center of cybersecurity strategy, policy, roles, oversight, and supply chain risk management. Digital governance research now treats AI, privacy, cybersecurity, and online safety as interconnected rather than siloed. So Claude should not sit in a side conversation owned by one team. It belongs inside enterprise risk, operational controls, and executive reporting. Good governance does not slow innovation. It makes innovation defensible.

Third-party exposure makes the issue even bigger. FINRA’s 2025 oversight report added a new third-party risk landscape topic and new AI-related updates. That is a strong signal for any executive team evaluating vendor controls. If a SaaS provider, outsourcer, or service partner uses Claude behind the scenes, your governance model should require disclosure, data-handling terms, logging expectations, and incident escalation procedures. That is where a sharper vendor AI due diligence model becomes useful.

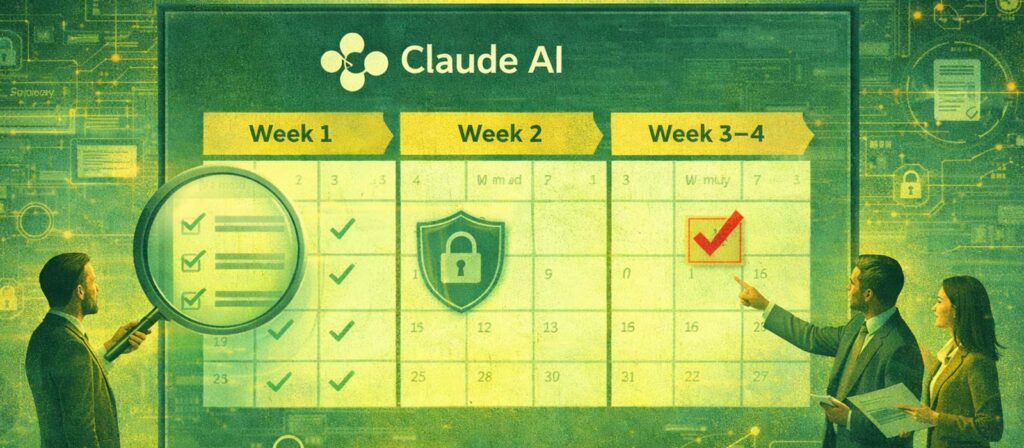

A 30-Day Claude AI Governance Plan

Each executive should ask a different first question. A CFO should ask where Claude touches forecasts, pricing, financial narratives, or investor materials. A Chief Compliance Officer should ask which Claude workflows create recordkeeping, supervision, or notification obligations. A COO should ask where teams rely on Claude to move faster, and what breaks if the output is wrong. A CTO should ask which identities, repositories, APIs, and logs Claude can touch before the next expansion phase. Those questions create ownership faster than generic policy language.

A 30-day Claude AI governance plan works better than a six-month debate. Start by inventorying current Claude use, including unofficial use. Then classify what data may enter the tool and what must stay out. After that, map approved use cases by role, enable the strongest available access controls, and turn on logging and usage review. Next, define validation rules for high-impact workflows. Then update training. That step matters because 83% of IT and business professionals believe employees already use AI at work, while only 31% say their organizations have a formal, comprehensive AI policy. A survey of more than 3,000 digital trust professionals found that 89% expect to need AI training within two years.

You do not need a massive committee to begin. A small cross-functional group can move fast if it owns policy, access, training, vendor review, and incident escalation. That committee model already shows up in practical AI governance guidance and in this leadership checklist for CFOs. The key is to give it authority, not just a discussion slot. When governance groups lack decision rights, adoption outruns control.

Govern Claude Before Usage Goes Invisible

The message is direct. Claude is not risky because it is popular. Claude is risky because it is becoming useful across sensitive work. That is exactly why it deserves leadership attention now. The organizations that win here will not be the ones with the loudest AI story. They will be the ones with the clearest rules, the strongest evidence, and the fastest path from experimentation to governed value. Good digital governance can accelerate innovation rather than block it. That is the posture real leaders should own.