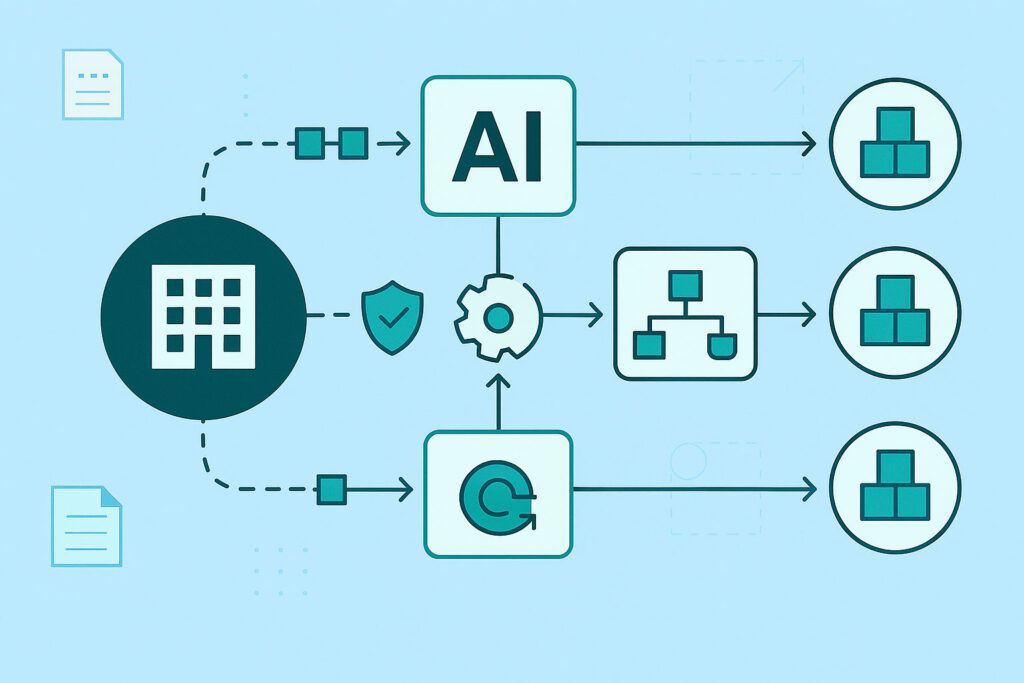

AI has settled into the background of most organizations’ daily operations. It triages information, summarizes decisions, and keeps work moving. Your vendors also rely on AI. Across SaaS platforms, service providers, cloud partners, and security tools, AI is increasingly used to process data and automate workflows. As that reliance grows, your risk moves with it.

In our December Monthly Intelligence Report, we covered Anthropic’s disruption of the GTG-1002 espionage campaign, where attackers used AI to accelerate reconnaissance and credential testing across dozens of organizations. What stood out to me was the speed and scale that automation enabled. AI now shapes risk well beyond your internal systems, and your vendor ecosystem likely plays a larger role in business resilience than you are accounting for.

This article focuses on where that exposure tends to surface and the questions we CFOs need to ask as AI becomes part of how vendors operate.

Where Vendor AI Creates Exposure

AI inside a vendor’s environment shapes your organization’s exposure in two important ways.

- AI that touches your data – Vendors increasingly use AI to analyze, categorize, or enrich information. That may include financial data, customer information, internal documents, operational records, or communications. This introduces questions around data use, retention, model training practices, and regulatory expectations.

- AI that acts on your behalf – Some vendors now use AI-driven automation to route tickets, adjust configurations, take remediation steps, or interact with APIs. Automation can improve efficiency, but it also raises questions about permissions, oversight, and how quickly a misstep could ripple into operations.

These two dimensions – what AI sees and what AI does – form the foundation of how CFOs should think about vendor-related AI risk.

Five Scenarios CFOs Need to Understand

Vendor AI usage doesn’t need to be malicious to create meaningful downstream cost exposure. Here are five scenarios that reflect patterns we’re already monitoring across real environments.

Data Overexposure and Model Training Practices

A vendor may use your data to improve its models. Even with good intentions, this creates retention concerns, regulatory exposure, and communication challenges. Once data becomes part of a model, unwinding its influence can be exceptionally difficult.

→ Potential financial implications: Unplanned legal spend, remediation efforts, and harder-to-quantify reputation impact.

Over-Permissioned Integrations

AI features sometimes rely on service accounts or API keys with broad access. If these credentials are misused or compromised, the resulting exposure or disruption can be substantial.

→ Potential financial implications: Wider attack blast radius, more systems to investigate, and higher downtime risk.
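To make the over-permissioning point concrete, here is a minimal sketch your Security team could adapt when reviewing an AI-enabled integration. It compares the permissions an integration has been granted with the permissions it needs. The scope names and the `excess_scopes` helper are hypothetical; real vendors define their own permission models.

```python
# Hypothetical scope names for illustration only; real vendors
# define their own permission models and naming.

def excess_scopes(granted: set[str], required: set[str]) -> set[str]:
    """Return the scopes an integration holds but does not need."""
    return granted - required


# Assumed example: an AI ticket-routing feature that only needs ticket access.
granted = {"tickets:read", "tickets:write", "users:read", "admin:config"}
required = {"tickets:read", "tickets:write"}

extra = excess_scopes(granted, required)
print(sorted(extra))  # ['admin:config', 'users:read']
```

Any scope that appears in the result is a candidate for removal, or at least a question for the vendor about why it is needed.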

Automated Actions Without Full Context

AI-driven adjustments or recommendations may move quickly without full context. An action taken to resolve an issue can unintentionally weaken a control or interrupt a critical workflow.

→ Potential financial implications: Rollback work, outage windows, and SLA penalties.

Limited Logging and Auditability

Some vendors cannot provide detailed logs that show when AI interacts with your data, what actions it takes, or how certain decisions occur. Limited evidence slows investigations and complicates both regulatory and insurance review.

→ Potential financial implications: Extended investigations, disputed insurance claims, and more conservative regulatory disclosures.
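As a rough illustration of what "detailed logs" could mean in practice, the sketch below checks whether a vendor-supplied AI event record carries the fields an investigator would typically want. The field names and the sample record are assumptions for illustration, not a standard or any vendor's actual log format.

```python
# Assumed field names; agree on the real list with your Security team
# and the vendor, since log formats vary widely.
REQUIRED_FIELDS = ("timestamp", "tenant_id", "actor", "action", "data_touched")


def missing_fields(event: dict) -> list[str]:
    """Return the required fields that are absent or empty in an event record."""
    return [name for name in REQUIRED_FIELDS if not event.get(name)]


# Hypothetical record showing an AI-driven action with incomplete evidence.
sample_event = {
    "timestamp": "2025-12-01T14:03:22Z",
    "tenant_id": "example-tenant",
    "actor": "ai-assistant",
    "action": "updated_firewall_rule",
}

print(missing_fields(sample_event))  # ['data_touched']
```

A record that fails this kind of check is the gap that tends to slow an investigation or an insurance review.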

AI-Assisted Attacks Through Vendor Channels

Attackers are increasingly looking for higher-trust pathways. Vendor portals, support channels, and integrations can be attractive entry points, especially with automation accelerating the grunt work of reconnaissance and testing.

→ Potential financial implications: Incidents that are complex to attribute and resolve, which increase both direct costs and scrutiny from auditors and boards.

Understanding these scenarios can help you determine which vendor relationships require closer governance.

The Vendor AI Due Diligence Questions Every CFO Should Ask

As a CFO, you need to have confidence in how your vendors use AI so you can appropriately manage that risk. To get the strong answers you need, vendor conversations should include four categories of questions.

1. Scope & Reliance Questions

To understand scope and reliance, ask:

- Where does AI appear in the services we use?

- What tasks or decisions does AI influence, and which features can initiate workflow actions?

- Which AI models or platforms do you rely on?

These questions surface where AI sits inside the vendor relationship.

2. Data Handling Questions

Ask vendors to explain:

- What data of ours is processed by AI systems?

- Is any of our data used to train or tune models?

- How is our data isolated from other customers?

- What are your retention practices for AI systems?

These answers influence your regulatory, contractual, and reputational exposure.

3. Security & Governance Questions

For assurance that vendors’ AI usage is managed with structured oversight, ask:

- What access controls govern AI systems and related service accounts?

- Do you log prompts, outputs, or AI-driven events tied to our tenant?

- Which frameworks or internal policies address AI controls?

- How do you track unexpected or unintended AI behavior?

Governance maturity is a strong indicator of operational discipline.

4. Incident Transparency Questions

During an incident, you need confidence in the vendor’s ability to provide timely, specific information. Ask:

- How will you notify us if AI is involved in an incident?

- Can you provide logs, prompts, and outputs associated with AI actions tied to our data or environment?

- Who is accountable on your side for managing AI-related risk?

- What is your process for investigating AI behavior?

Strong incident transparency protects your financial position and informs accurate reporting.

Contracts and SLAs Need to Reflect This New Exposure

If your contracts don’t mention AI explicitly, they were written for a different risk profile. As AI adoption expands, work with your Legal, Procurement, and Security teams to introduce language that addresses:

- Restrictions on model training using your data

- Logging and retention expectations for AI-driven activity

- Notification requirements before enabling new AI features

- Oversight for automated actions taken on your behalf

- Cooperation expectations during investigations

- Clear ownership for AI-related risk

These provisions help ensure all parties understand their responsibilities before issues arise.

Oversight Doesn’t End with Questionnaires

Questionnaires and due diligence reviews provide useful insight, but a complete picture requires ongoing monitoring. Set clear expectations that your internal teams will:

- Track where vendors introduce new AI features

- Watch for changes in permissions or data flows

- Review disclosures during renewals

- Identify vendors with the highest AI exposure

This work often spans Security, IT/Engineering, Legal, and Procurement. Your role is to make sure those teams have direction, budget, and a shared definition of success.
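One simple way to give those teams a shared starting point is to rank vendors on the two dimensions described earlier: what AI sees and what AI does. The sketch below is illustrative only. The 0 to 3 scales, the additive score, and the vendor entries are all assumptions, and your teams may prefer different weights.

```python
from dataclasses import dataclass


@dataclass
class Vendor:
    name: str
    data_sensitivity: int  # what AI sees: 0 (none of our data) to 3 (regulated or financial data)
    action_autonomy: int   # what AI does: 0 (suggests only) to 3 (changes systems without review)


def exposure_score(vendor: Vendor) -> int:
    """Assumed scoring: add the two dimensions. Adjust weights to fit your risk model."""
    return vendor.data_sensitivity + vendor.action_autonomy


# Hypothetical inventory entries.
vendors = [
    Vendor("Vendor A", data_sensitivity=3, action_autonomy=1),
    Vendor("Vendor B", data_sensitivity=1, action_autonomy=0),
    Vendor("Vendor C", data_sensitivity=2, action_autonomy=3),
]

# Highest exposure first, so review effort goes where it matters most.
ranked = sorted(vendors, key=exposure_score, reverse=True)
for vendor in ranked:
    print(vendor.name, exposure_score(vendor))
```

Even a coarse ranking like this helps decide which vendors receive disclosure requests and contract updates first.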

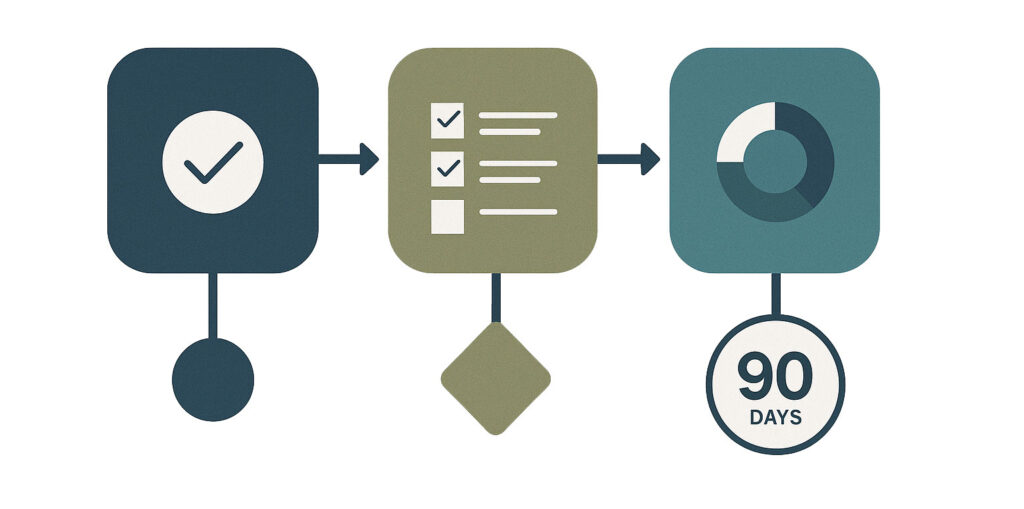

A CFO-Approved 90-Day Plan for Vendor AI Oversight

If your organization is already addressing AI governance internally, these steps can extend that work into the vendor landscape:

- Request AI usage disclosures from your top-tier vendors.

- Ensure your vendor inventory flags where AI appears in services you rely on.

- Work with Legal and Procurement to update contract templates with AI provisions.

- Ask Security and IT to report on monitoring plans for AI-enabled vendor integrations.

- Include vendor AI exposure in leadership and board conversations.

These steps can help reduce uncertainty and support stronger governance in 2026.

Govern Risk Where Value Is Created

AI gives vendors new ways to deliver value and efficiency. It also influences how risk moves through your supply chain. To build resilience, you must understand how AI is being used in the services you depend on – and what that means for your obligations, exposure, and continuity.

If you’d like a structured way to evaluate vendor AI exposure or update your third-party governance program, our team can share the frameworks and questionnaires we use with clients like you.